openpilot 0.11

The first robotics agent fully trained in a learned simulation

This release marks the start of a new learning paradigm, one that relies solely on neural networks, from planning to simulating driving videos.

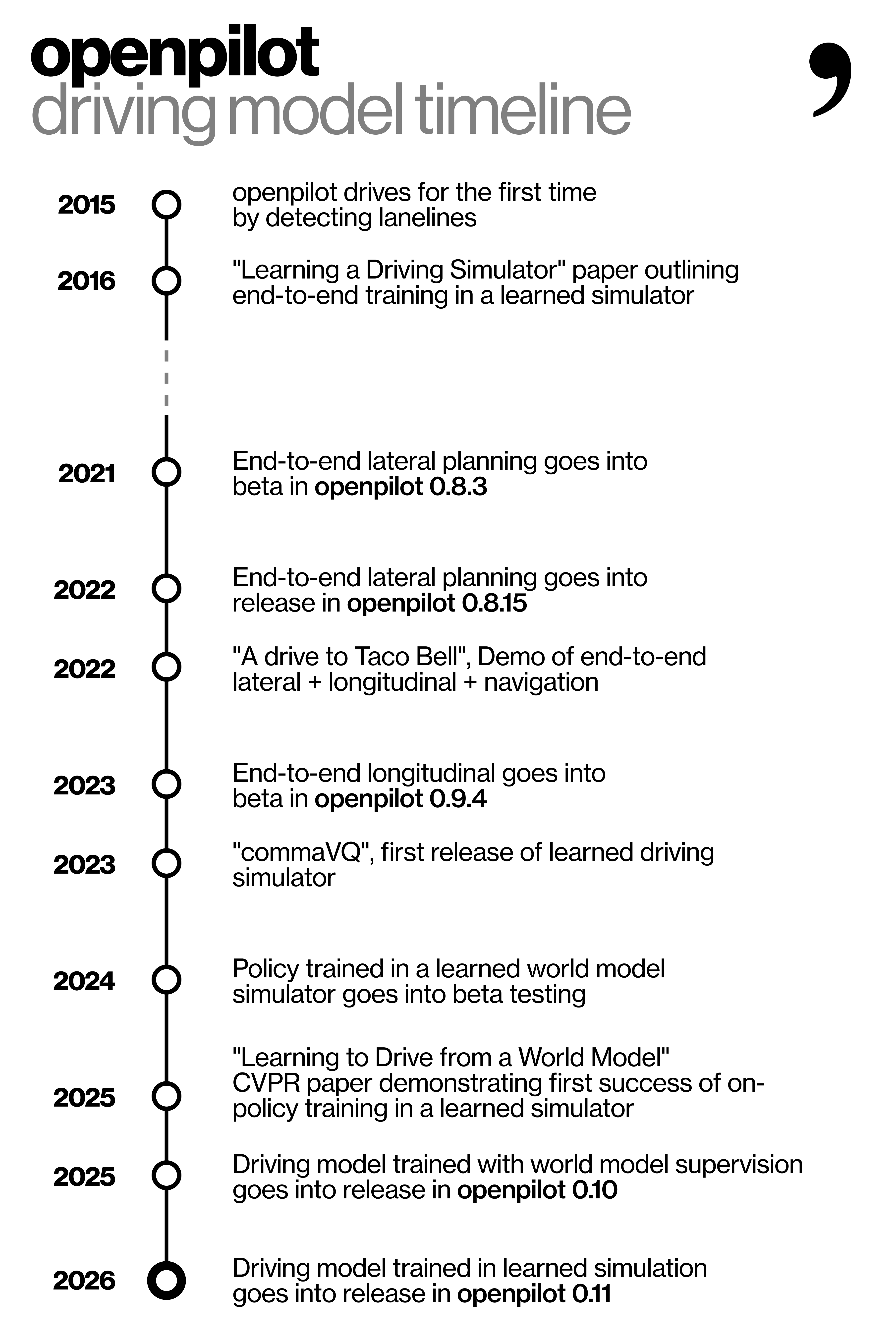

Timeline of progress towards end-to-end learning in a learned simulator for openpilot’s driving models

This is the result of a decade-long project started at comma in 2016, when we first described this idea in our paper “Learning a Driving Simulator”. In 2021, we started shipping models that were trained end-to-end and did not reference lanelines or any hand-coded features. These models were trained on-policy in classical simulation. In 2023, we released our first transformer-based driving simulator in commaVQ, a.k.a. a World Model. In 2025, with 0.10, we released our first driving model trained using a World Model for planning, which replaced the hand-coded MPCs that were used prior, but still used a reprojective simulator for videos. Today, we are releasing our first driving model trained using both videos and plans generated from a World Model. To the best of our knowledge, this is the first real-world robotics agent shipped to real users that was fully trained in a learned simulation.

In addition to the new driving model and simulator, this release comes with a more polished interface for comma four and a whopping 77% reduction in idle power draw. We think you are going to love this release.

New driving model

Left: a simulated video generated by the commaVQ world model. Right: a simulated video generated by the world model at the time of the release. Top: narrow field of view frame. Bottom: wide field of view frame. Note that the commaVQ world model only generated the wide field of view frame, which explains the missing top left frame. The new simulator produces significantly better image quality and temporal consistency, with fewer temporal artifacts such as flickering.

Autonomous driving systems generally rely on one or a mix of these approaches:

- Train a neural network on a large corpus of labeled data using labelers and/or other specialized neural networks

- Train a neural network on a set of carefully curated examples from the fleet showing rare, informative cases

- Train a neural network on a set of examples generated using classical hand-coded simulators

In this release, the WMI model 🍉 (#36798) is trained in a simple two-step approach:

- Train a large neural network simulator, a.k.a. a World Model, on a large corpus of unlabeled data collected from the fleet

- Train a small neural network, a.k.a. driving policy, on data generated by its interactions with the World Model

Why is this significant?

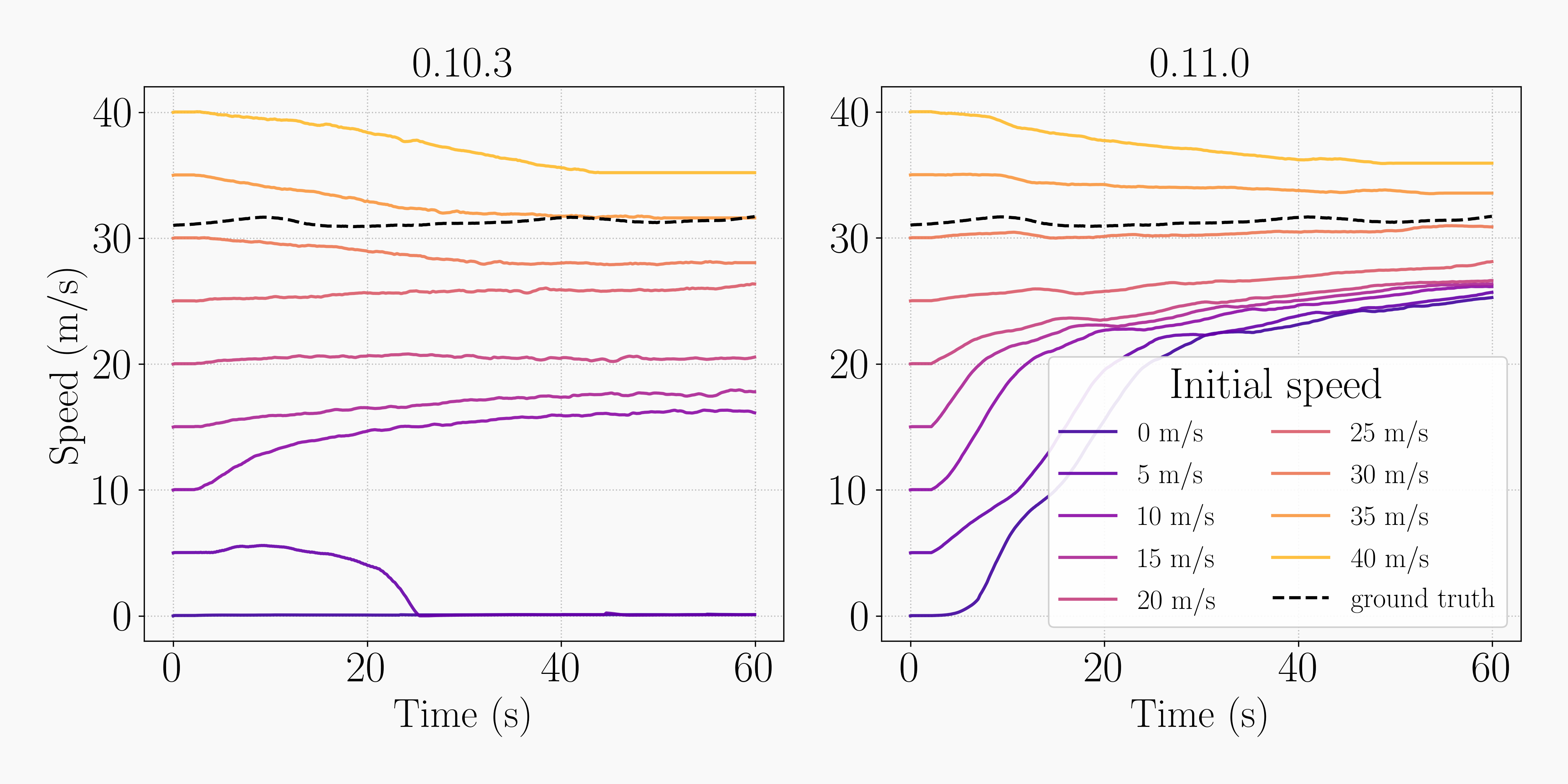

The classical simulator used to train the driving models of previous releases could only simulate small deviations in longitudinal policy. This meant it was difficult to show examples to the driving model during training of slowing down or speeding up to a desirable speed. As a result, the driving models did not reliably drive at reasonable speeds on the highway.

The new model starting from a stop on the highway.

Speed convergence test: we initialize the simulation in an empty highway scene at different initial speeds and let the model converge to its desired speed. The new model is much more confident at selecting an appropriate speed on the highway than previously released models.

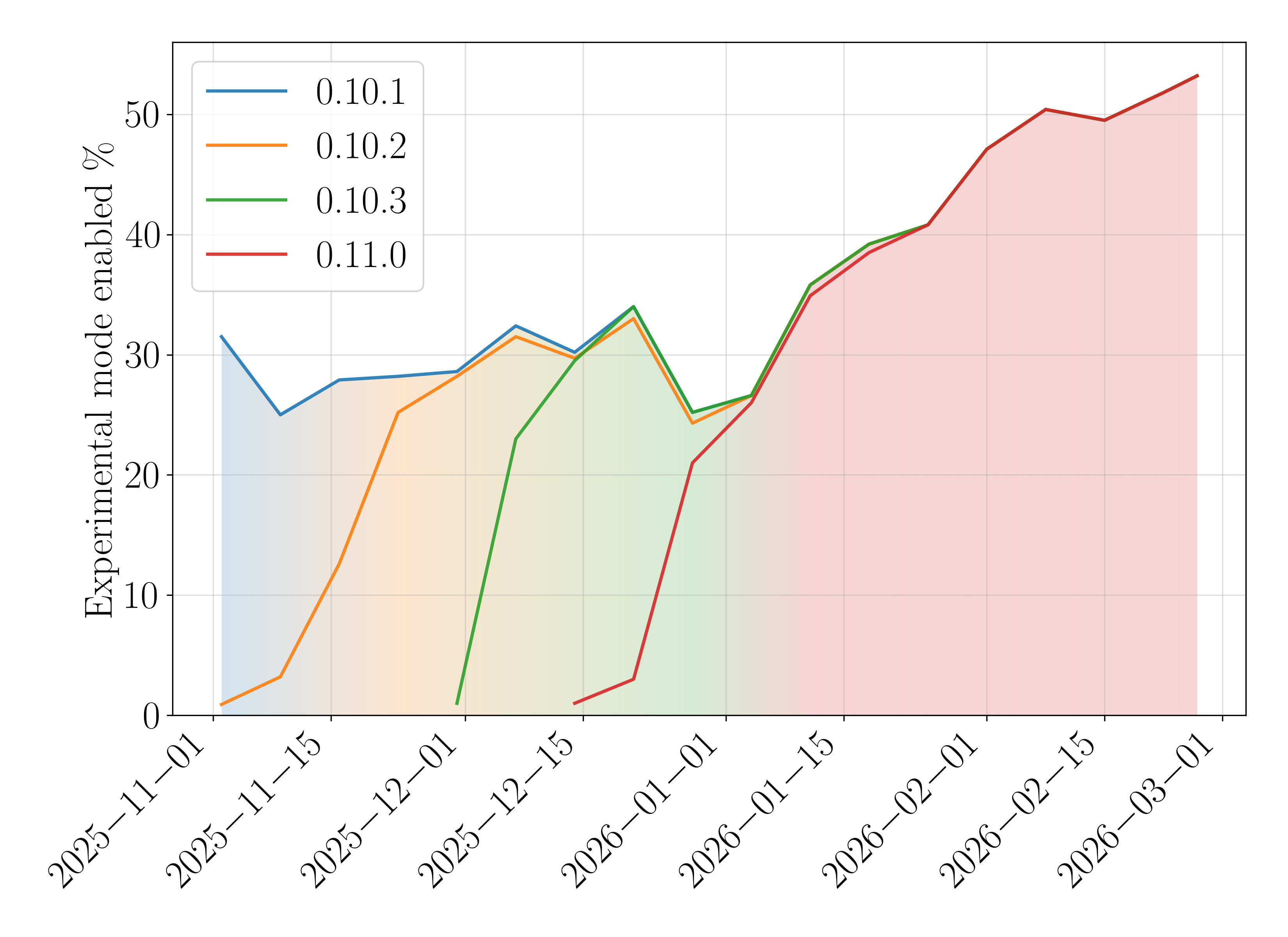

This poor speed convergence is improved in this release by using the learned simulator. We merged the new model into openpilot nightly on January 19th. You can see in our usage data that users on nightly now prefer Experimental mode (end-to-end longitudinal policy) over openpilot’s ACC policy.

Percentage of segments with Experimental mode enabled for platforms supporting Experimental mode by version. 0.11.0 is our best performing version yet for Experimental mode usage.

The new model has noticeably improved reactivity around parked cars.

On top of the longitudinal simulation range issue, training driving models using hand-coded simulators is tricky because the policy will learn to cheat using artifacts of the simulator. The only ways to avoid this are to (i) improve the simulator with more hand-coded rules or (ii) put bottlenecks on the policy’s representations. As a result, every policy improvement came with a risk of cheating, which limited the model improvements we could make. We have described this cheating behavior in more detail in a previous blog post.

With neural network simulators, fidelity can be improved with more computation and more data, and as long as the policy stays in the realm of “The Big World Hypothesis”, i.e. is smaller than the simulator, it won’t cheat. For those reasons, we expect many improvements to the driving quality to follow very quickly.

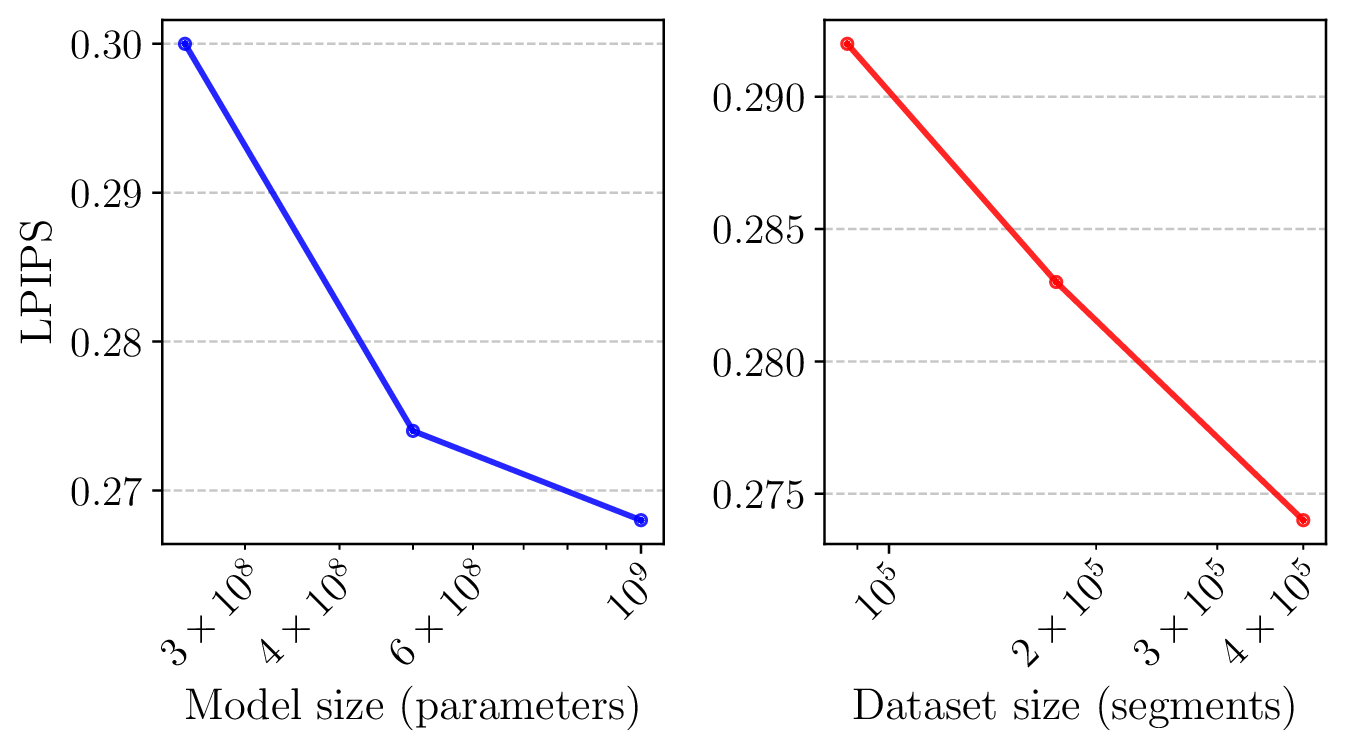

Quality of images generated by the World Model measured by the Learned Perceptual Image Patch Similarity (LPIPS) gets better with more data and more parameters.

Left: Reprojective Simulation, Right: World Model Simulation. We command a 1m deviation to the right (top half) or to the left (bottom half), then let the model recover after the deviation is achieved. Notice the accuracy of the World Model Simulation in achieving the deviation compared to the (perfect) Reprojective Simulation, and the consistency between the narrow field of view frame (top) and the wide field of view frame (bottom).

The driving policy gets better at recovering from noise with more training epochs. Top is the narrow field of view frame, bottom is the wide field of view frame.

World Model

We describe the World Model and the driving policy training strategy in detail in our Learning to Drive from a World Model CVPR 2025 paper. But the TL;DR is:

A World Model is a learned model of an environment’s dynamics: given the current observation and an action, it predicts what will happen next. For driving, the World Model is given a context of frames and a steering/acceleration action and predicts the next frame as well as future actions. In this sense, the World Model is a driving simulator learned from data instead of hand-coded using 3D geometry rules. Our version of the World Model is also conditioned with future frames, making it an “Inverse Dynamics Model” in addition to a World Model.

Examples of simulation rollouts using the World Model.

For the curious readers, we provide more technical details about how the models evolved since the first version of the architecture.

Technical Details

Frame Compressor

The Compressor is a frame encoder/decoder Transformer (ViT). The encoder has 50M parameters, while the decoder has 100M (2✕ deeper). It encodes 2 camera views (narrow and wide) of size 3✕128✕256 each into a single latent space of size 32✕16✕32. It is trained with a mix of image quality losses: Learned Perceptual Image Patch Similarity (LPIPS), adversarial loss, and least squares error.
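To put those shapes in perspective, here is a small back-of-the-envelope calculation of how many values go in and come out of the compressor. It only counts array elements from the sizes quoted above; it says nothing about bit depth or about how the real compressor is implemented.

```python
from math import prod

# Shapes as quoted in the text: two camera views of 3x128x256 each,
# compressed into a single 32x16x32 latent.
NUM_VIEWS = 2
VIEW_SHAPE = (3, 128, 256)
LATENT_SHAPE = (32, 16, 32)

input_values = NUM_VIEWS * prod(VIEW_SHAPE)   # elements fed to the encoder
latent_values = prod(LATENT_SHAPE)            # elements in the latent
compression_ratio = input_values / latent_values

print(input_values, latent_values, compression_ratio)  # 196608 16384 12.0
```

In other words, the latent holds 12✕ fewer values than the two input frames combined.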

We adopt the Masked Auto Encoder (MAE) formulation where the input to the encoder has randomly masked patches. The compressor learns to unmask the image, promoting better modelability.

These improvements to the compressor were released in version 0.10.1.

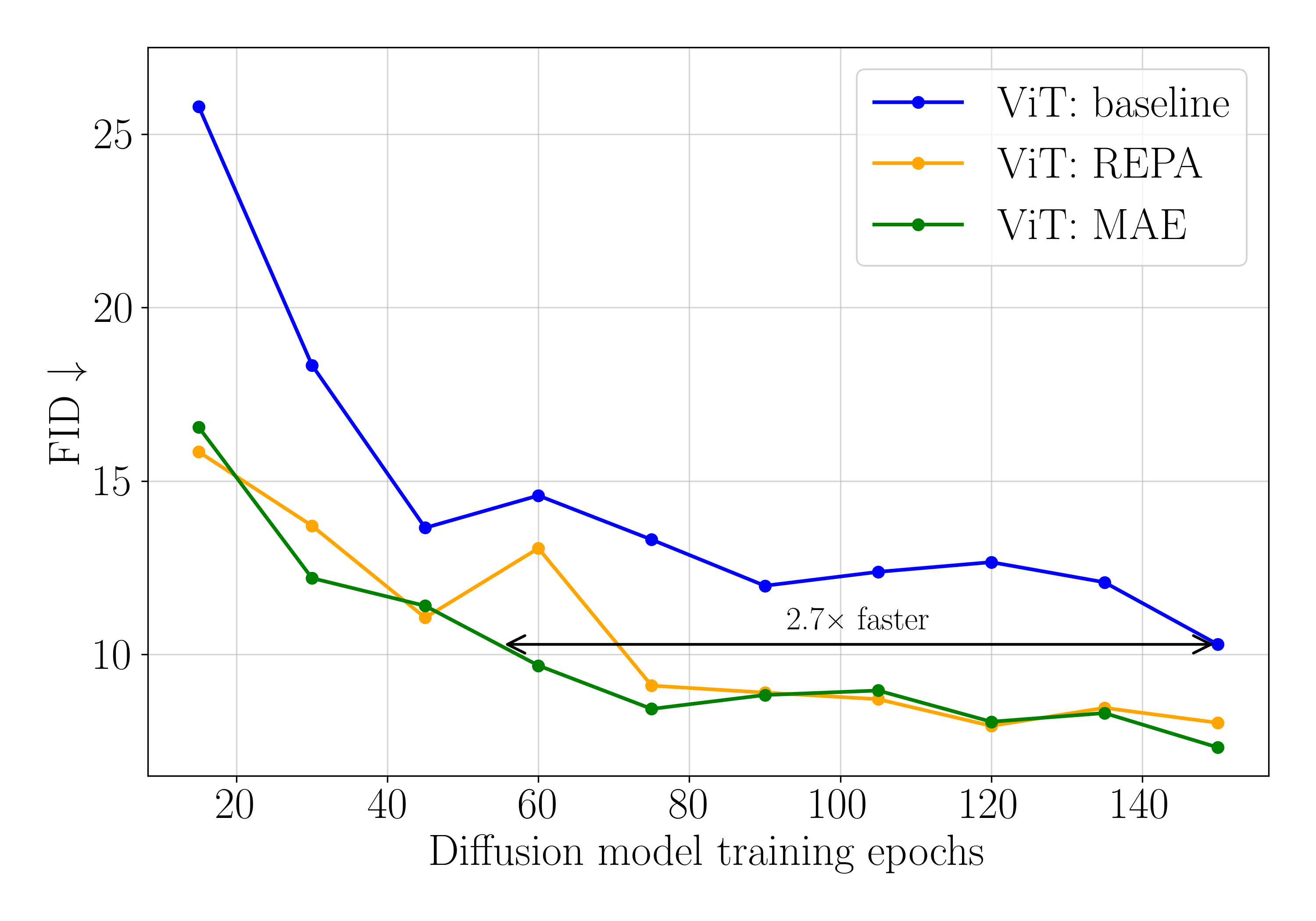

We train a small Image Diffusion Model using various compressors to evaluate their modelability. The Masked Auto Encoder formulation is 2.7✕ better than the baseline, and comparable to representation-alignment (REPA) while not needing an additional loss.
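For readers unfamiliar with the Masked Auto Encoder idea, the random patch masking step can be sketched roughly as follows. This is a toy illustration, not the training code: the 16✕32 patch grid, the 0.5 mask ratio, and the function name `mask_patches` are all assumptions made for the example.

```python
import random

def mask_patches(num_patches, mask_ratio, rng):
    """Return one boolean per patch, True where the patch is hidden from the encoder."""
    num_masked = int(num_patches * mask_ratio)
    masked = set(rng.sample(range(num_patches), num_masked))
    return [index in masked for index in range(num_patches)]

rng = random.Random(0)
# Hypothetical values: a 16x32 patch grid with half of the patches masked.
mask = mask_patches(16 * 32, 0.5, rng)
print(sum(mask), "of", len(mask), "patches masked")
```

The encoder would only see the unmasked patches, and the reconstruction losses push the model to fill in the rest.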

Diffusion Transformer

The Diffusion Transformer is a 2B parameter transformer (n_layer=48, n_head=25, n_embd=1600) adapted to 3-dimensional data. Each sample is composed of 2 seconds of past context, 1 second of future conditioning, and a simulation window of 0...7 seconds, all sampled at 5 frames per second.
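The numbers above translate into frame counts and a per-head width as follows. This is plain arithmetic on the quoted figures; the helper name `frames_in_sample` is made up for the example, and the ordering of frames inside a sample is not modelled.

```python
FPS = 5
PAST_CONTEXT_S = 2
FUTURE_CONDITIONING_S = 1
MAX_SIMULATION_S = 7

def frames_in_sample(simulation_s):
    """Count frames per segment for a given simulation window length in seconds."""
    if not 0 <= simulation_s <= MAX_SIMULATION_S:
        raise ValueError("simulation window must be between 0 and 7 seconds")
    return {
        "past_context": PAST_CONTEXT_S * FPS,
        "future_conditioning": FUTURE_CONDITIONING_S * FPS,
        "simulation": simulation_s * FPS,
    }

# Width of each attention head implied by n_embd=1600 and n_head=25.
head_dim = 1600 // 25

print(frames_in_sample(7), head_dim)
```

With the longest window, a sample spans 10 context frames, 5 future conditioning frames, and 35 simulated frames.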

The actions (6DOF relative positions), the diffusion noise level, and the frame indices within the sample are encoded and injected into the transformer using AdaLN-single (introduced in PixArt-α).

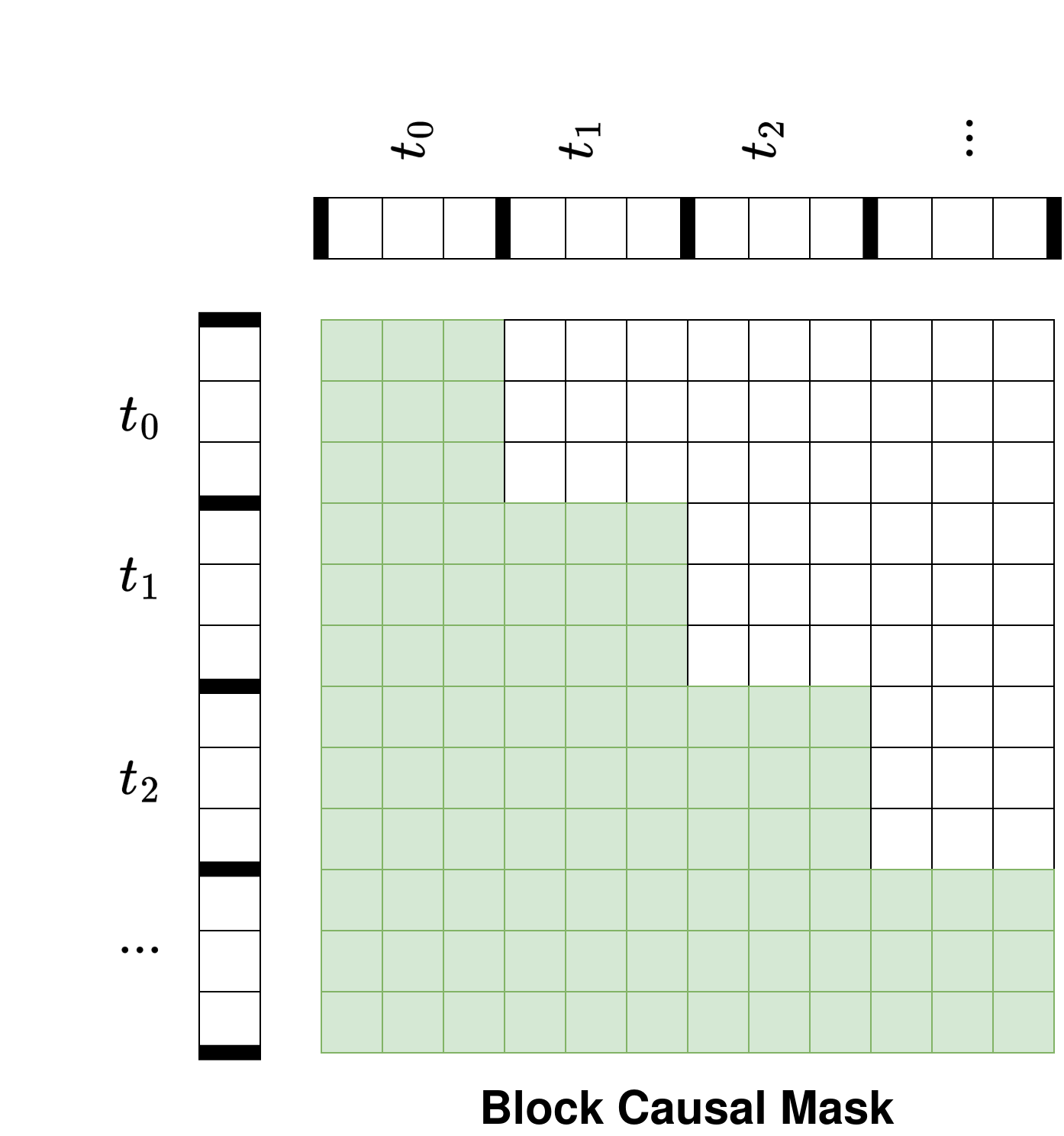

The Transformer is block-causal: all attention layers are equipped with a block-causal mask, allowing tokens to attend to tokens from their frame and past frames, but not future frames.

The block-causal attention masking.
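A block-causal mask is easy to build from the frame index of each token. The sketch below uses plain Python lists to stay dependency-free; the function name and the tiny sizes are illustrative only, and a real implementation would build the same pattern as a tensor.

```python
def block_causal_mask(num_frames, tokens_per_frame):
    """mask[q][k] is True when query token q may attend to key token k.

    A token may attend to every token in its own frame and in earlier frames,
    but never to tokens in later frames.
    """
    total = num_frames * tokens_per_frame
    return [
        [(k // tokens_per_frame) <= (q // tokens_per_frame) for k in range(total)]
        for q in range(total)
    ]

# Tiny example: 2 frames with 2 tokens each.
for row in block_causal_mask(2, 2):
    print(["x" if allowed else "." for allowed in row])
```

Tokens of the first frame see only their own frame, while tokens of the second frame see both.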

The diffusion model is trained on 2.5M minutes of driving video with the Rectified Flow formulation with logit normal noise sampling. We also augment the noise level input to the model to make it more robust to accumulated errors in the auto-regressive rollout process.
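As a rough sketch of what logit normal noise sampling means: draw a Gaussian value and squash it through a sigmoid to get a noise level in (0, 1), then blend data and noise linearly. The mean and standard deviation defaults, the function names, and the convention that t=1 is pure noise are assumptions for this example, not details confirmed by the text.

```python
import math
import random

def sample_logit_normal(rng, mean=0.0, std=1.0):
    """Sample a noise level in (0, 1) by passing a Gaussian draw through a sigmoid."""
    z = rng.gauss(mean, std)
    return 1.0 / (1.0 + math.exp(-z))

def noisy_sample(data, noise, t):
    """Linear (rectified flow style) blend; assumed convention: t=0 is data, t=1 is noise."""
    return (1.0 - t) * data + t * noise

rng = random.Random(0)
levels = [sample_logit_normal(rng) for _ in range(1000)]
print(min(levels), max(levels))
```

Compared with uniform sampling, this concentrates training on intermediate noise levels.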

Sampling is done frame by frame using 15 Euler steps, with Classifier-Free Guidance (CFG) with a strength of 2.0. Since the transformer is block-causal, we are able to use KV-caching, and achieve a 12.2 frames/sec/gpu throughput.
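The sampling loop can be sketched on a scalar toy problem. Everything here is illustrative: `velocity_fn` stands in for the transformer, the toy velocity field is made up, and the convention that sampling integrates from t=1 (noise) down to t=0 (data) is an assumption. Only the 15 Euler steps and the guidance strength of 2.0 come from the text.

```python
def cfg_velocity(v_cond, v_uncond, strength):
    """Classifier-Free Guidance: extrapolate from the unconditional prediction."""
    return v_uncond + strength * (v_cond - v_uncond)

def sample_frame(velocity_fn, x_noise, num_steps=15, cfg_strength=2.0):
    """Integrate from t=1 (noise) to t=0 (data) with fixed-size Euler steps.

    velocity_fn(x, t, conditioned) is a hypothetical stand-in for the model.
    """
    x = x_noise
    dt = 1.0 / num_steps
    for step in range(num_steps):
        t = 1.0 - step * dt
        v = cfg_velocity(velocity_fn(x, t, True), velocity_fn(x, t, False), cfg_strength)
        x = x - dt * v
    return x

def toy_velocity(x, t, conditioned):
    """Toy field whose straight-line flow ends at 1.0 (conditioned) or 0.0 (unconditioned)."""
    target = 1.0 if conditioned else 0.0
    return (x - target) / t

print(sample_frame(toy_velocity, 0.3, cfg_strength=1.0))
print(sample_frame(toy_velocity, 0.3, cfg_strength=2.0))
```

With a strength of 1.0 the toy sampler lands on the conditioned target, and with 2.0 it overshoots away from the unconditional one, which is the amplification effect guidance is used for.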

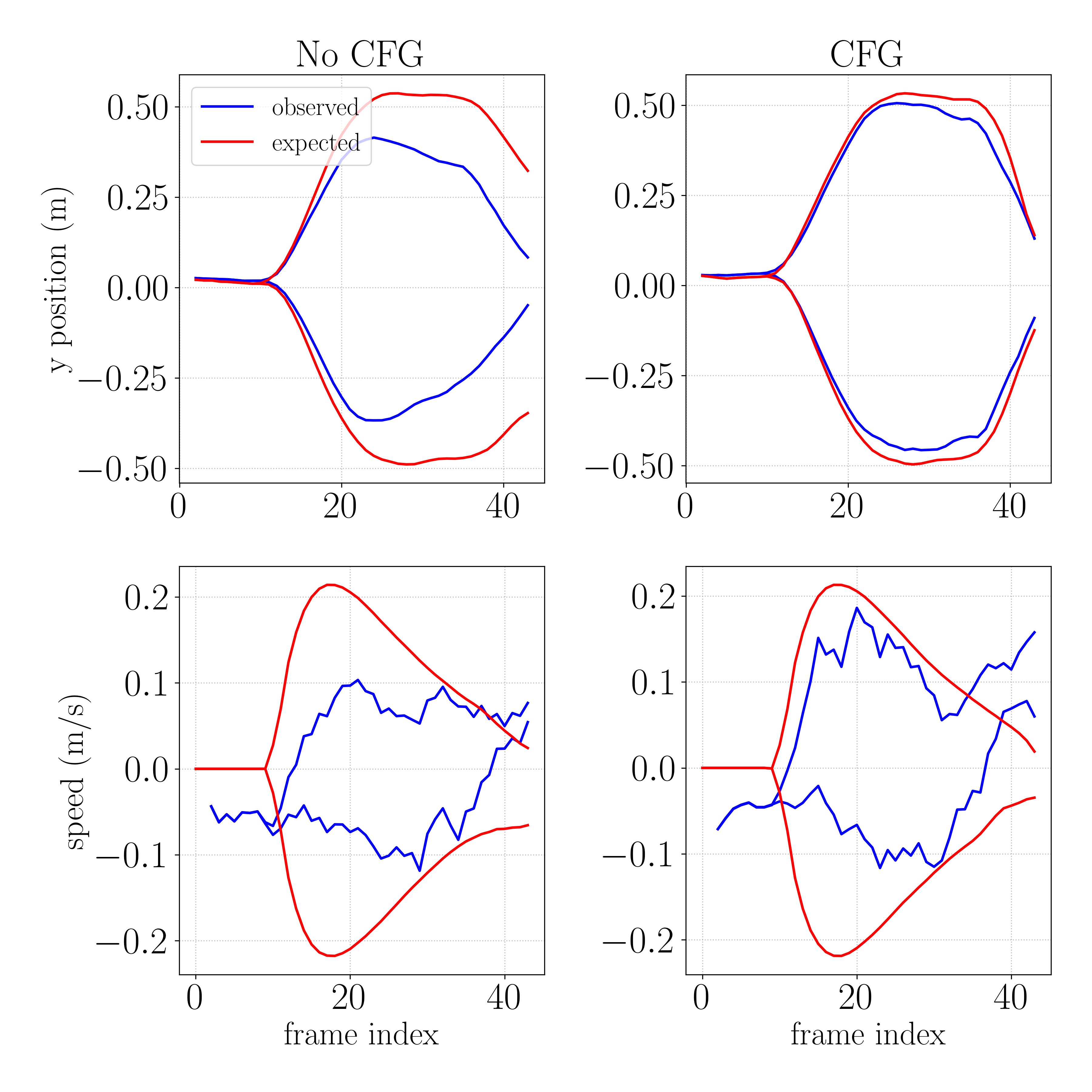

For the first half of the rollout, we command a deviation (top: left/right lateral deviation, bottom: acceleration/deceleration). We use a pose net to compare the observed deviation (blue) with the expected deviation (red). Using CFG (right) significantly improves the model’s action following compared to not using CFG (left).

Driving Policy

The off-policy model is a FastViT trained to predict a variety of outputs, including lane lines, road edges, lead car information, etc. These outputs are only used for visualization and not used as part of the end-to-end policy (besides the lead car fallback).

The on-policy model is a small transformer with 2 seconds of context at 5 frames per second. It is trained to predict the actions suggested by the world model given a simulation rollout generated by the world model. The rollouts are generated using the world model and the latest policy checkpoint saved by the learning process, which makes this strategy "near on-policy."
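The data collection loop described above can be sketched in a few lines. This is a heavily simplified toy, not the actual training pipeline: frames are reduced to a single lateral offset, and `collect_rollout`, `toy_world_model_step`, and `idle_policy` are hypothetical names. The exact pairing of context and target action in the real system may differ.

```python
def collect_rollout(world_model_step, policy, context, num_steps):
    """Roll the policy out inside the simulator and record training pairs.

    world_model_step(frames, action) -> (next_frame, suggested_action)
    policy(frames) -> action
    Each pair holds the context the policy will see and the action to imitate.
    """
    frames = list(context)
    pairs = []
    for _ in range(num_steps):
        action = policy(frames)
        next_frame, suggested_action = world_model_step(frames, action)
        frames = frames[1:] + [next_frame]  # slide the fixed-length context window
        pairs.append((tuple(frames), suggested_action))
    return pairs

def toy_world_model_step(frames, action):
    """Toy dynamics: the 'frame' is a lateral offset that the action shifts."""
    next_frame = frames[-1] + action
    suggested_action = -0.5 * next_frame  # steer back towards the center
    return next_frame, suggested_action

def idle_policy(frames):
    """Stand-in for the latest policy checkpoint; it does nothing yet."""
    return 0.0

pairs = collect_rollout(toy_world_model_step, idle_policy, [1.0, 1.0], num_steps=2)
print(pairs)
```

Because the rollout is driven by the policy's own actions, the policy is trained on the states it actually reaches rather than on states a human driver reached.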

During the rollouts, the policy is subjected to various types of action and observation noise, which is essential to making it robust in the real world.

Lateral Noise: lag, lag compensation mismatch, lateral acceleration, steering

Longitudinal Noise: lag, lag compensation mismatch, pitch, gas/brake
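As one concrete example of action noise, actuator lag can be simulated with a short delay queue. The class below is an illustrative assumption about how such lag could be injected, not a description of the actual training code; the two-step delay is arbitrary.

```python
from collections import deque

class LaggedActuator:
    """Delay commanded actions by a fixed number of simulation steps."""

    def __init__(self, delay_steps, initial=0.0):
        # Pre-fill the queue so the first outputs are the initial action.
        self._queue = deque([initial] * delay_steps)

    def apply(self, commanded):
        """Queue the new command and return the one that takes effect now."""
        self._queue.append(commanded)
        return self._queue.popleft()

actuator = LaggedActuator(delay_steps=2)
applied = [actuator.apply(command) for command in (1.0, 2.0, 3.0)]
print(applied)  # [0.0, 0.0, 1.0]
```

Randomizing the delay per rollout would expose the policy to a range of lags, in the spirit of the lag noise listed above.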

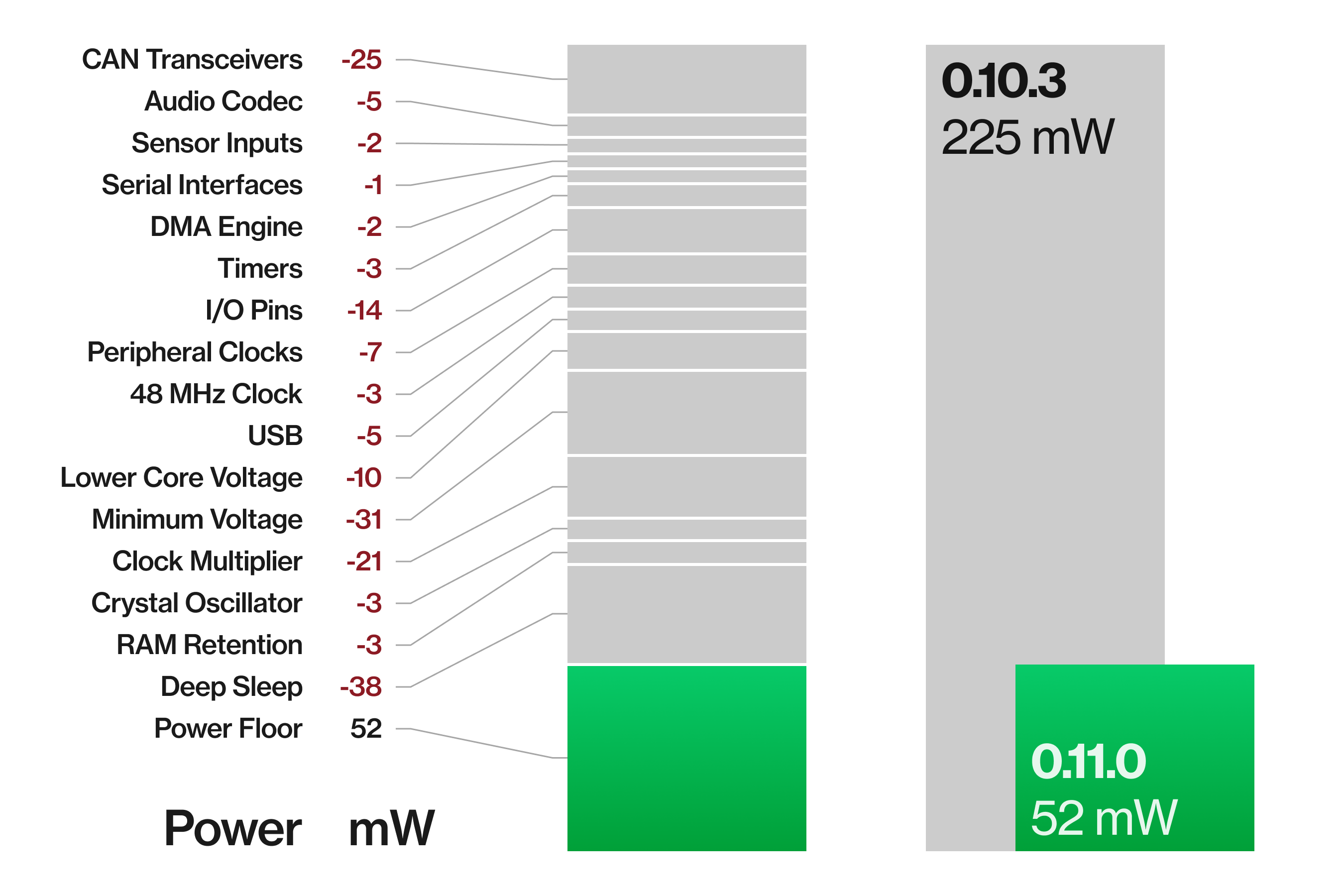

comma four power saving

When comma four is parked, the panda microcontroller stays awake to detect ignition and boot the main SOC back up. Previously, this idle state drew around 225 mW from the car’s 12V battery. In this release, we cut it down by 77% to 52 mW (panda#2340).

Parasitic draws above 600 mW are generally considered worth investigating. The previous 225 mW were a real chunk of the car’s key-off power budget. Now at 52 mW, that’s the difference between a dead battery in weeks vs months.

| | Power | Current | vs. car idle |
|---|---|---|---|
| Typical car (key-off) | 250–600 mW | 20–50 mA | |
| + comma four (previous) | 225 mW | 19 mA | 38–95% |
| + comma four (0.11) | 52 mW | 4.2 mA | 8–21% |
| BMW diagnostic threshold | 600 mW | 50 mA | 100–240% |
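The headline reduction and the current column can be checked with a few lines of arithmetic. The conversion assumes a nominal 12.0 V supply, which is an assumption made here; the published current values may reflect a slightly different measured voltage, so small differences are expected.

```python
def reduction_percent(before_mw, after_mw):
    """Relative reduction in power draw, in percent."""
    return 100.0 * (before_mw - after_mw) / before_mw

def current_ma(power_mw, supply_v=12.0):
    """Current in mA for a given power in mW; supply_v is an assumed nominal value."""
    return power_mw / supply_v

print(round(reduction_percent(225, 52)))  # 77
print(current_ma(225), current_ma(52))
```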

panda power reduction

The panda STM32H7 MCU on comma four runs at 240 MHz on VOS1 voltage scaling. With all peripheral clocks enabled it draws 225 mW in power save state. We reduced this in three stages, each measured individually on comma four hardware:

Disable unused peripherals. CAN transceivers, audio, ADC, SPI, UART, DMA, timers, GPIO config, USB. Each of these saves a few mW individually. Together they reduce the power from 225 mW to 158 mW.

Drop voltage scaling. From VOS1 (high performance) to VOS3 (low power). This setting reduces the core voltage and clock frequency to save power. The lowest power mode is sufficient for listening for ignition and saves 41 mW.

Enter stop mode. The most pronounced saving comes from disabling the PLL, HSE, and SRAM retention, then entering a deep sleep state called “stop mode”. The CPU halts entirely. The EXTI interrupt lines remain active: CAN RX for CAN ignition and both SBU pins for HW ignition. This brings idle power to 52 mW.
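Putting the three stages side by side shows where the savings come from. The intermediate value after the voltage scaling stage is not quoted directly; it is derived here by assuming the 41 mW saving applies on top of the 158 mW figure.

```python
# (stage description, idle power in mW after the stage)
stages = [
    ("baseline, all peripheral clocks enabled", 225),
    ("unused peripherals disabled", 158),
    ("voltage scaling VOS1 -> VOS3", 158 - 41),  # derived, see assumption above
    ("stop mode", 52),
]

# Saving contributed by each stage relative to the previous one.
savings = [
    (name, previous_mw - current_mw)
    for (_, previous_mw), (name, current_mw) in zip(stages, stages[1:])
]

for name, saved_mw in savings:
    print(f"{name}: -{saved_mw} mW")
print("total:", sum(saved for _, saved in savings), "mW")
```

Under that assumption, disabling peripherals and entering stop mode each contribute roughly the same saving, with voltage scaling adding the rest.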

UI refinement

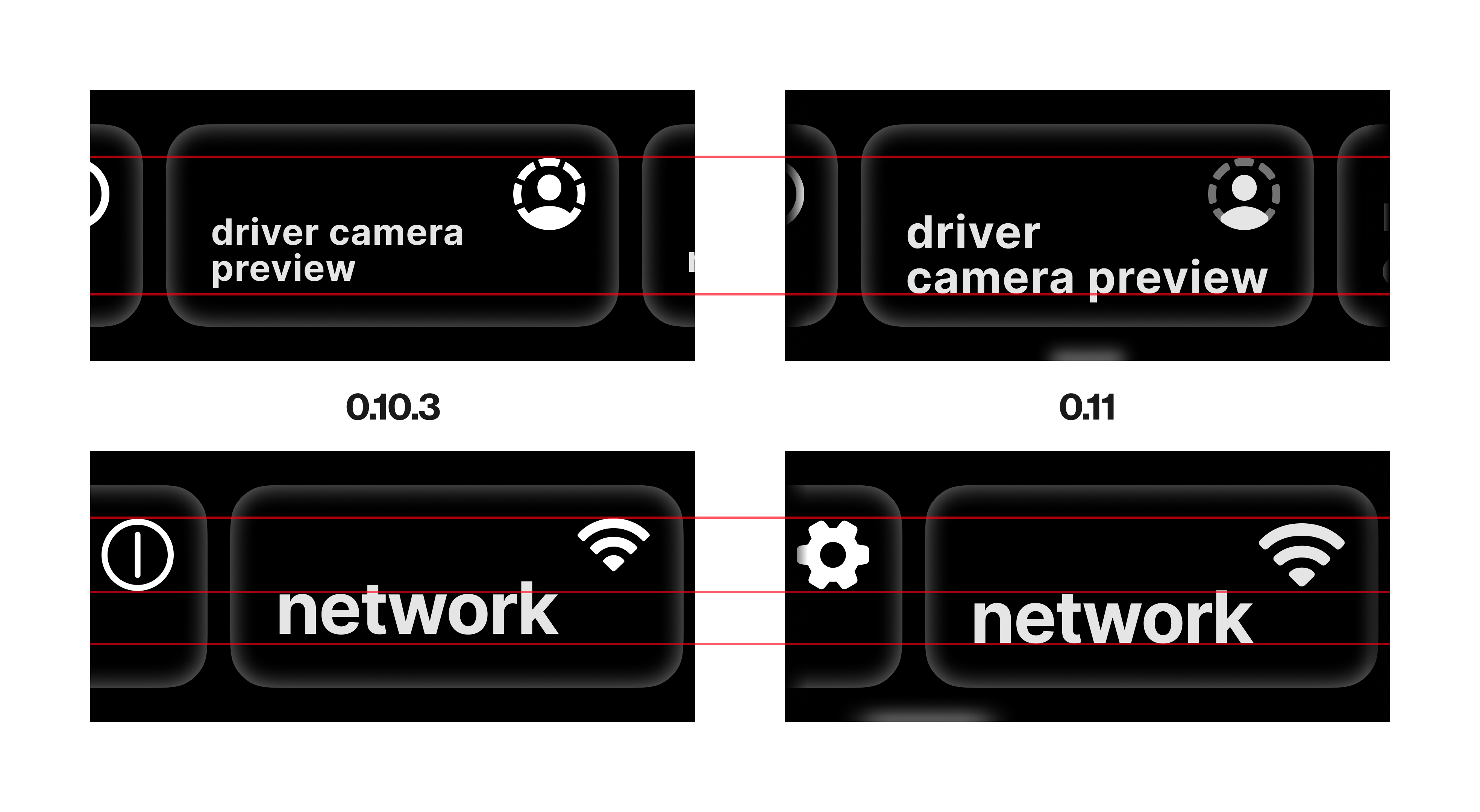

With comma four, we introduced a whole new design language to translate the big-screen experience on the comma 3X to a new compact form factor. This release tightens up that interface with a handful of improvements, large and small.

Setup & Onboarding rework

At launch, the setup and onboarding flows used vertical scrolling pages with their own navigation buttons—inconsistent with the rest of the UI. Now, both use horizontal scrollers with informational cards, matching the regular settings menus (#37264).

First-time user setup flow

Onboarding flow before first drive

Wi-Fi menu rework

The Wi-Fi menu has been totally redesigned (#37152). It’s not only simpler to use, with one fewer sub-menu, but it also matches the rest of the system visually and functionally.

Real navigation stack

We now draw a stack of widgets instead of just the active one, so you can see where you are in the UI while swiping back (#37275).

Old: only top page was drawn

New: background pages are drawn
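The difference between the two behaviors can be sketched as a choice of which widgets get drawn each frame. This is a conceptual illustration with made-up names, not the actual UI code.

```python
def widgets_to_draw(stack, draw_background=True):
    """Return widgets in draw order (bottom first) for a navigation stack.

    stack[-1] is the active page. With draw_background=False only the
    active page is drawn, which mirrors the old behavior.
    """
    if not stack:
        return []
    return list(stack) if draw_background else [stack[-1]]

stack = ["home", "settings", "wifi"]
print(widgets_to_draw(stack, draw_background=False))  # ['wifi']
print(widgets_to_draw(stack))                         # ['home', 'settings', 'wifi']
```

Drawing bottom to top means the page underneath is already on screen when the active page slides away during a swipe back.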

Steering arc for angle control cars

The steering arc visualizes how close the car is to its lateral actuation limits. Torque control cars are straightforward to visualize since openpilot knows the current and maximum torque it can apply. Angle control cars just command a steering angle, where the maximum can change depending on speed, so there’s no direct percentage.

This release adds steering arc support for angle cars by estimating torque from the current lateral acceleration achieved vs. the maximum available to the car (#37078).
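Conceptually, the estimate boils down to a clamped ratio. The sketch below is an assumption about the general shape of that calculation; the function name, the units, and the example numbers are hypothetical, and the actual implementation lives in the referenced pull request.

```python
def steering_arc_fraction(lateral_accel, max_lateral_accel):
    """Fraction of the available lateral acceleration currently in use, in [0, 1]."""
    if max_lateral_accel <= 0:
        raise ValueError("max_lateral_accel must be positive")
    return min(abs(lateral_accel) / max_lateral_accel, 1.0)

# Hypothetical values in m/s^2: turning at 1.5 with 3.0 available.
print(steering_arc_fraction(-1.5, 3.0))  # 0.5
```

The sign is dropped because the arc only needs to show how close the car is to its limit; the direction of the turn would be handled separately by the display.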

Ford Bronco Sport with steering arc display

The little bits

- Factory reset (#37584) and updater (#36235) UI updated with the standardized horizontal layout

- New scroll indicator that gently compresses and bounces back (#37162)

- Shimmer effect on sliders, hinting at the slide direction (#37640)

- Anti-aliased text and overhauled icons for a more refined look

- Opacity falloff on the edges of horizontal scrollers (#37239)

- Button labels realigned for a better fit

Cars

Testing overhaul

We replaced opendbc’s third-party testing infrastructure with stdlib unittest and a few hundred lines of code.

mull, the LLVM-based mutation testing framework we used to verify our safety tests, required specific clang versions and took 45 minutes to run. We replaced it with a custom mutation test runner (opendbc#3130) that runs in 30 seconds.
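For readers new to mutation testing, the idea is to deliberately break the code and confirm that the tests notice. The toy below mutates a Python function with the standard `ast` module; it is only an illustration of the concept and is not how the opendbc runner works. All function names here are made up.

```python
import ast

SOURCE = """
def within_limit(value, limit):
    return value <= limit
"""

class FlipLessEqual(ast.NodeTransformer):
    """Mutate `<=` into `<`, a classic off-by-one mutant."""

    def visit_Compare(self, node):
        self.generic_visit(node)
        node.ops = [ast.Lt() if isinstance(op, ast.LtE) else op for op in node.ops]
        return node

def load(tree):
    """Compile a module AST and return its within_limit function."""
    namespace = {}
    exec(compile(tree, "<mutant>", "exec"), namespace)
    return namespace["within_limit"]

def boundary_test(fn):
    """Checks the exact threshold, so it notices the mutation."""
    return fn(10, 10) and not fn(11, 10)

def weak_test(fn):
    """Only checks values far from the threshold, so the mutant survives."""
    return fn(5, 10) and not fn(20, 10)

original = load(ast.parse(SOURCE))
mutant = load(ast.fix_missing_locations(FlipLessEqual().visit(ast.parse(SOURCE))))

print("boundary test kills mutant:", not boundary_test(mutant))
print("weak test kills mutant:", not weak_test(mutant))
```

A surviving mutant points at a gap in the tests, which is exactly the signal a mutation test runner is looking for.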

The safety tests also got faster. Since safety checks are threshold comparisons, we only need to test around the boundaries rather than exhaustively. This cut safety test time by 31% (opendbc#3200).
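The reasoning can be illustrated with a toy threshold check: for a simple comparison, the behavior can only change at the limit, so testing the values immediately around it covers the same cases as an exhaustive sweep. The limit of 300 and the function name are hypothetical and not taken from any real safety model.

```python
LIMIT = 300  # hypothetical torque limit

def torque_allowed(torque):
    """Toy safety check: a plain threshold comparison."""
    return -LIMIT <= torque <= LIMIT

# Exhaustive sweep versus values just inside, on, and just outside each boundary.
exhaustive_cases = range(-1000, 1001)
boundary_cases = [sign * (LIMIT + delta) for sign in (-1, 1) for delta in (-1, 0, 1)]

print(len(exhaustive_cases), "exhaustive cases vs", len(boundary_cases), "boundary cases")
print([(torque, torque_allowed(torque)) for torque in boundary_cases])
```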

We removed pytest (opendbc#3191), scons (opendbc#3194), and four other dependencies (opendbc#3186, opendbc#3187, opendbc#3188, opendbc#3190). The best dependencies are the ones you delete.

Car Ports

- Acura TLX 2025 CAN FD support thanks to MVL! (opendbc#3037)

- Honda 10G Civic manual transmission support thanks to MVL! (opendbc#3025)

- Lexus LS 500 2018 support thanks to Hacheoy! (opendbc#3010)

- Kia K7 2017 support thanks to royjr! (opendbc#3123)

Enhancements

- Toyota: improve lateral control by migrating RAV4 TSS2 and SecOC platforms to torque control (opendbc#3091, opendbc#3086)

- Toyota: add stop and go to Lexus LS, update torque data thanks to Hacheoy! (opendbc#3080)

- GM: improved Silverado lateral tuning thanks to firestar5683! (opendbc#3106, opendbc#3110)

Join the community

openpilot is built by comma together with the openpilot community. Get involved in the community to push the driving experience forward.

- Join the community Discord

- Post your driving feedback in #driving-feedback on Discord

- Upload your driving data with Firehose Mode

- Get started with openpilot development

Want to build the next release with us? We’re hiring.
