openpilot 0.8.15

This is the most important openpilot release yet. In this release, we are taking laneless mode out of alpha and making it the default policy.

It has been comma’s goal since the beginning to teach machines how to drive by observing humans driving, and this release is the manifestation of that dream. Some of you have tried previous versions of laneless mode, but for the majority of our users, this will be the first time you’ll experience how openpilot has learned to drive by watching you.

Of course, there’s a myriad of other improvements in this release too that we hope you’ll enjoy!

Laneless Mode as Default

In laneless mode, openpilot plans where to drive end-to-end. By that, we mean it looks at the video stream coming from the cameras and outputs where in 3D space it wants to be. It learns to do this during training by trying to predict where humans would drive, in a simulation environment that is based on real data. You can learn more about this here, here, and here.

This means the driving model was never explicitly told about lanelines, curves, intersections, lane changes, or any other traffic rules. It infers everything from how humans tend to behave in similar scenarios. If people are who they surround themselves with, then openpilot’s driving model is who it has seen drive. And so in the truest sense, openpilot contains a little piece of each and every one of you!

Some laneless driving in Joshua Tree National Park

Previous releases have relied on this end-to-end behavior to navigate situations with unclear or no lanelines, but with this release, laneline positions are not used at all in any openpilot logic. For now, all of this applies only to lateral (steering) control of openpilot; similar work is underway for longitudinal (gas/brake) control.

This release contains two improvements to laneless behavior. The lateral planner costs have been updated to reduce ping-pong, and this release includes a new driving model that was trained using an improved simulator. The new model is more confident in driving in a reasonable place in the lane. Combined, we think those improvements make laneless good enough to be active 100% of the time.

What does this mean for you? The biggest improvement you will notice, if you have not tried laneless mode before, is the more natural and comfortable way it enters and exits turns. Additionally it drives more naturally around intersections, exits, merges, and other more nuanced scenarios.

Torque Controller

We introduce a new controller that controls the steering wheel to turn the car. By using a better understanding of vehicle modeling and tire dynamics, we can do more accurate torque feedforward and more sensible feedback gains.

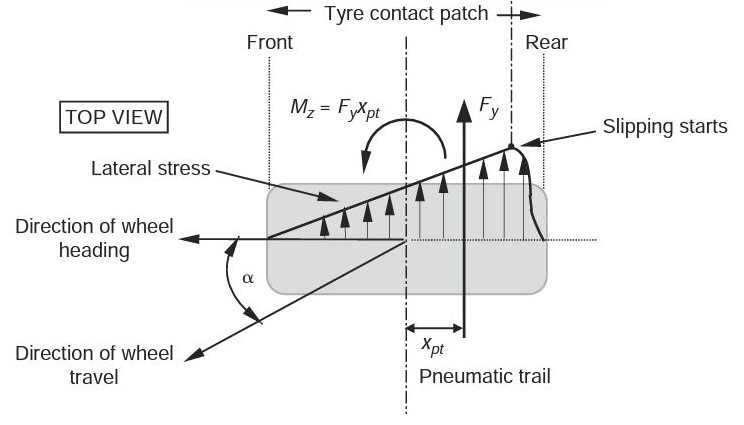

Top-down view of a tire

In the force diagram of the top-down view of the tire you can see two main quantities, the lateral force Fy and the pneumatic trail Xpt. Fy is the lateral force acting upon the tires, which we can assume to be proportional to the lateral acceleration of the vehicle plus any gravitational acceleration if the vehicle is on a banked road. The pneumatic trail, Xpt, is the distance from the center of the contact patch to the point on the tire upon which that force acts. In this diagram you can see the torque around the tire is just the product of those two quantities. The pneumatic trail can be assumed to be constant for a certain vehicle configuration, across all speeds.

This means the torque on the tire correlates linearly with the sum of lateral acceleration from the turn and lateral acceleration due to gravity (on banked roads). The torque on the steering wheel depends on this torque and a few more factors such as mechanical trail and steering ratio, which are also mostly constant for a certain vehicle.

What this all means is that to get an accurate prediction of how much torque needs to be applied to the steering wheel, we just need to know the single linear factor that converts desired lateral acceleration and road roll into desired steering wheel torque. The key revelation here is that steering torque does not depend on speed, steering wheel angle, or tire slip, just lateral acceleration.
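As a minimal sketch of that relationship, the snippet below computes a feedforward torque from a single linear factor. The function name, the `torque_per_accel` value, and the sign convention for roll are assumptions for illustration; they are not taken from openpilot's code.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def feedforward_torque(curvature, speed, roll, torque_per_accel):
    """Normalized steering torque from one linear factor.

    curvature: desired path curvature in 1/m
    speed: vehicle speed in m/s
    roll: road bank angle in rad (sign convention is an assumption)
    torque_per_accel: hypothetical per-car factor, torque per m/s^2
    """
    lateral_accel = curvature * speed ** 2  # acceleration from the turn
    gravity_accel = G * math.sin(roll)      # lateral component of gravity on a banked road
    return torque_per_accel * (lateral_accel + gravity_accel)


# The same 2 m/s^2 of lateral acceleration, reached at two different speeds
slow = feedforward_torque(curvature=2.0 / 10.0 ** 2, speed=10.0, roll=0.0, torque_per_accel=0.4)
fast = feedforward_torque(curvature=2.0 / 30.0 ** 2, speed=30.0, roll=0.0, torque_per_accel=0.4)
print(slow, fast)
```

Both calls ask for the same lateral acceleration, so they yield the same torque (up to floating-point rounding) even though speed and curvature differ.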

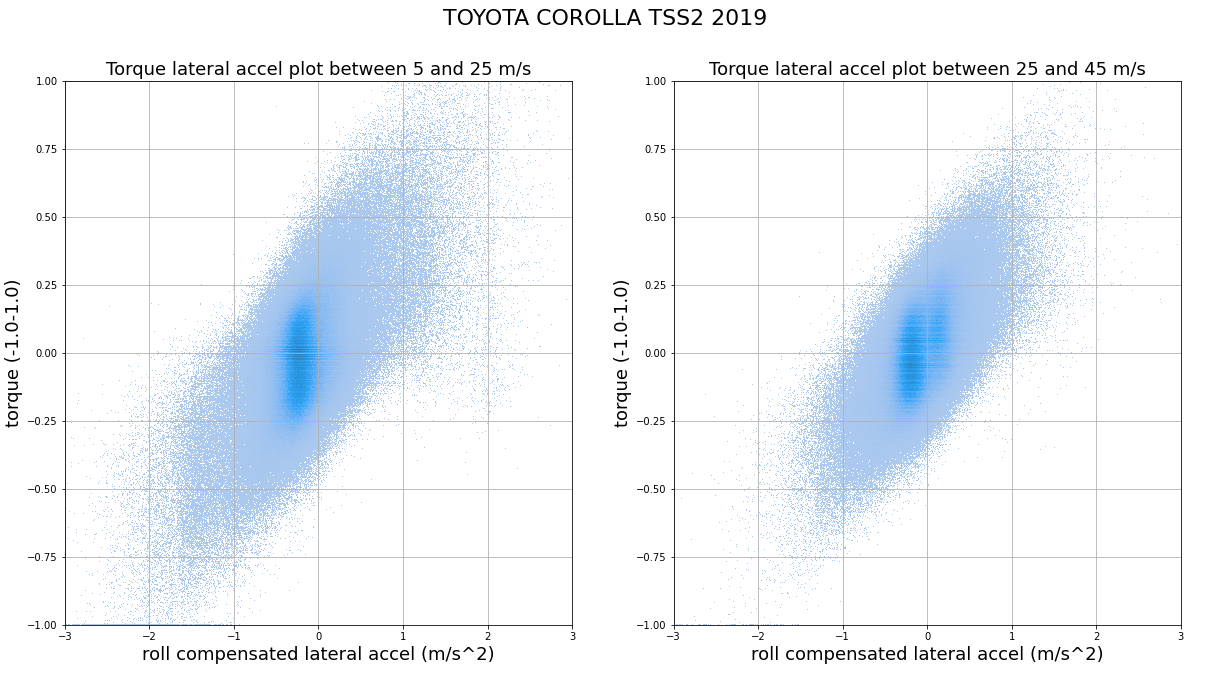

Density plot of requested torque vs roll compensated lateral acceleration for TSS2 Toyota Corolla. Notice the similarity for different speed ranges. The hysteresis shape is due to the friction in the steering rack.

To estimate these parameters, we run an optimization across recently collected data for cars in our fleet. We also estimate a value that represents the friction in the steering rack: the torque needed to overcome the static friction of the steering. These values are then used to calculate a sensible feedforward. To compensate for the remaining errors, we use PI feedback in the lateral acceleration domain. The resulting torque controller should give smoother and more accurate control for all vehicles that have a torque interface, without needing any car-specific tuning values.
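The structure described above could be sketched roughly as follows. This is not the code in latcontrol_torque.py: the class name, the gains, the way friction is applied, and the [-1, 1] torque range are all assumptions, and the desired acceleration is assumed to be roll compensated already.

```python
class LatAccelPI:
    """Feedforward plus PI feedback, both in the lateral acceleration domain."""

    def __init__(self, torque_per_accel, friction, kp, ki, dt):
        self.torque_per_accel = torque_per_accel  # linear factor, torque per m/s^2
        self.friction = friction                  # torque needed to overcome rack friction
        self.kp, self.ki, self.dt = kp, ki, dt
        self.integral = 0.0

    def update(self, desired_accel, measured_accel):
        error = desired_accel - measured_accel
        self.integral += error * self.dt
        # Feedback corrects the requested lateral acceleration, not the torque directly
        accel_cmd = desired_accel + self.kp * error + self.ki * self.integral
        torque = self.torque_per_accel * accel_cmd
        # Assumed friction handling: push through static friction toward the target
        if error > 0:
            torque += self.friction
        elif error < 0:
            torque -= self.friction
        # Assumed normalized torque limits
        return max(-1.0, min(1.0, torque))


controller = LatAccelPI(torque_per_accel=0.4, friction=0.05, kp=0.5, ki=0.1, dt=0.01)
torque = controller.update(desired_accel=1.0, measured_accel=0.8)
print(torque)
```

Because the feedback acts on acceleration, the same gains can in principle be shared across cars, with only the linear factor and friction value differing per vehicle. A real controller would also need integrator anti-windup, which is omitted here.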

Torque control is implemented for the TSSP Toyota Prius, Toyota Corolla, and some Hyundai/Kia models, for which it enables improved lateral control. In future releases the remaining cars will be changed to use this controller.

This lateral controller is implemented in a short and readable file at selfdrive/controls/lib/latcontrol_torque.py.

Full-frame Driver Monitoring

Until now, the driver monitoring (DM) model had to be run on a tight crop of the full interior image, even with the comma three’s wide-view driver facing camera. This compromise was due to both the comma two and the comma three sharing the same DM model, which was bottle-necked by the limited field of view of the comma two’s driver camera. The lack of field of view that the model sees not only confines device mounting to a narrow window but also fundamentally limits the range and quality of training data that the model is exposed to.

Old ‘wide’ model box (yellow) vs New fullframe DM model box (green)

With the deprecation of the comma two as well as the influx of a variety of new comma three data, we are able to migrate the whole DM ground-truthing stack to run on the full interior images of the comma three driver camera. Training data quality improved now that the driver’s face is no longer cut out of the frame 5% of the time. Also, the same ground truth can be generated on the passenger side, increasing the number of unique faces to learn from.

The new model is trained to predict the states for both the driver side and the passenger side, one of which is chosen to perform driver monitoring based on which side the driver is on. This is currently still manually determined by the “Enable Right-Hand Drive” toggle, but the new model is already shipped with the ability to tell which side the steering wheel (aka driver) is on. Once we confirm its reliability from large scale fleet data, the toggle will be removed.

Model figuring out which side driver is on

In addition to being able to see the steering wheel, the model now also sees a lot more context of the driver, including the driver’s posture, whether they are holding something in hand, etc. In the works is training a model to make end-to-end DM predictions, which relies on the model’s understanding of the whole scene instead of specific driver attributes. We believe it will greatly benefit from the extra information using the full frame.

To accommodate the bigger input size, YUV sampling is moved into the DSP and the SNPE runner now uses 8-bit input buffers to eliminate making unnecessary copies, which accounted for a significant portion of model power usage.

Navigation improvements

Speed Limits

In this release our navigation daemon “navd” has been rewritten from C++ to Python. We no longer rely on the parsing code in the Qt Location plugin. That plugin exposes a common routing API for different mapping providers, but it doesn’t allow for easy parsing of Mapbox-specific annotations, such as speed limits. Rewriting navd in Python not only makes the code simpler, but also allows us to extract more information about the current route. This rewrite was made possible by the refactor done in 0.8.14 to separate the map viewing code from the actual routing.

Since speed limits are part of the response from the routing API, speed limits are only available while navigating.
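To illustrate what pulling speed limits out of a routing response might look like, here is a small sketch. It assumes the route leg carries a `maxspeed` annotation list in the shape shown in `sample_leg`; that layout is written from memory of the Mapbox Directions API and should be checked against its documentation. The helper names are hypothetical and this is not navd's actual code.

```python
KPH_TO_MS = 1000.0 / 3600.0
MPH_TO_MS = 1609.344 / 3600.0


def parse_maxspeed(entry):
    """Convert one maxspeed annotation entry to m/s, or None if no limit is given."""
    if entry.get("unknown") or entry.get("none"):
        return None
    speed = entry.get("speed")
    if speed is None:
        return None
    unit = entry.get("unit")
    if unit == "km/h":
        return speed * KPH_TO_MS
    if unit == "mph":
        return speed * MPH_TO_MS
    return None  # unrecognized unit: treat as missing rather than guess


def speed_limits_for_leg(leg):
    """Return one speed limit (m/s or None) per annotated segment of a route leg."""
    entries = leg.get("annotation", {}).get("maxspeed", [])
    return [parse_maxspeed(entry) for entry in entries]


# Hand-written sample, not a real API response
sample_leg = {
    "annotation": {
        "maxspeed": [
            {"speed": 90, "unit": "km/h"},
            {"unknown": True},
            {"speed": 35, "unit": "mph"},
        ]
    }
}
limits = speed_limits_for_leg(sample_leg)
print(limits)
```

Segments without a known limit come back as `None`, which a UI could use to hide the speed limit sign instead of showing a stale value.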

If you notice missing (or incorrect) speed limits on your drives, it’s possible to submit corrections. This can be done to Mapbox, directly to Open Street Map using RapiD, or any of the other editors.

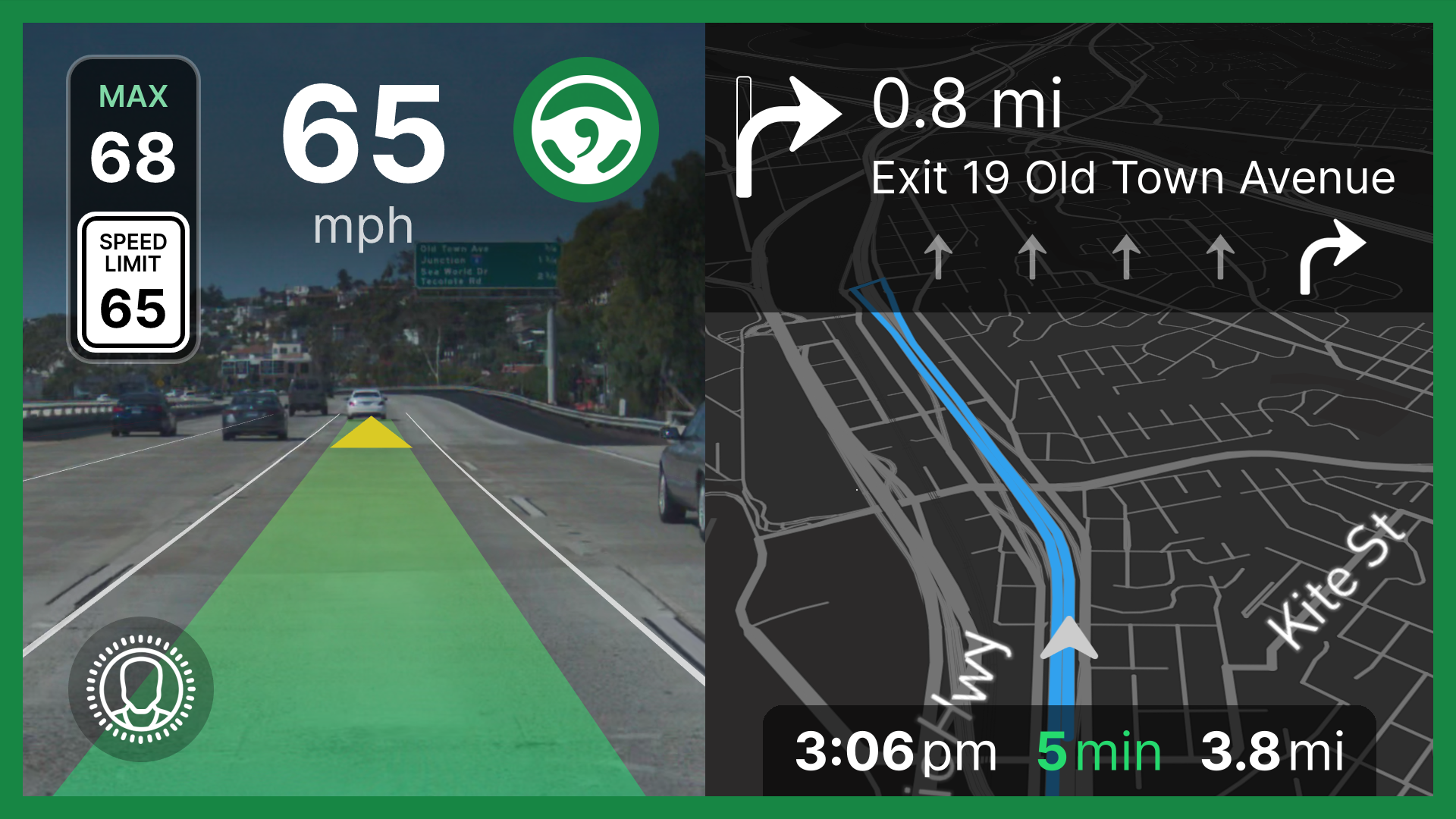

UI showing the current speed limit while navigating

Faster position fix

This release includes a brand new daemon called laikad. Currently, it reduces the initial “Waiting for GPS” time on startup. Read more about laikad in its upcoming blog post.

UI updates

Along with multilanguage, we’ve shipped some updates to the onroad UI. The font has been moved to Inter from OpenSans (#24937) to match offroad. The set speed box has been refreshed and now shows the speed limit as well (#24736). The road camera view now uses the camera calibration to center the vanishing point for a more consistent appearance across various cars (#24955).

Multilanguage

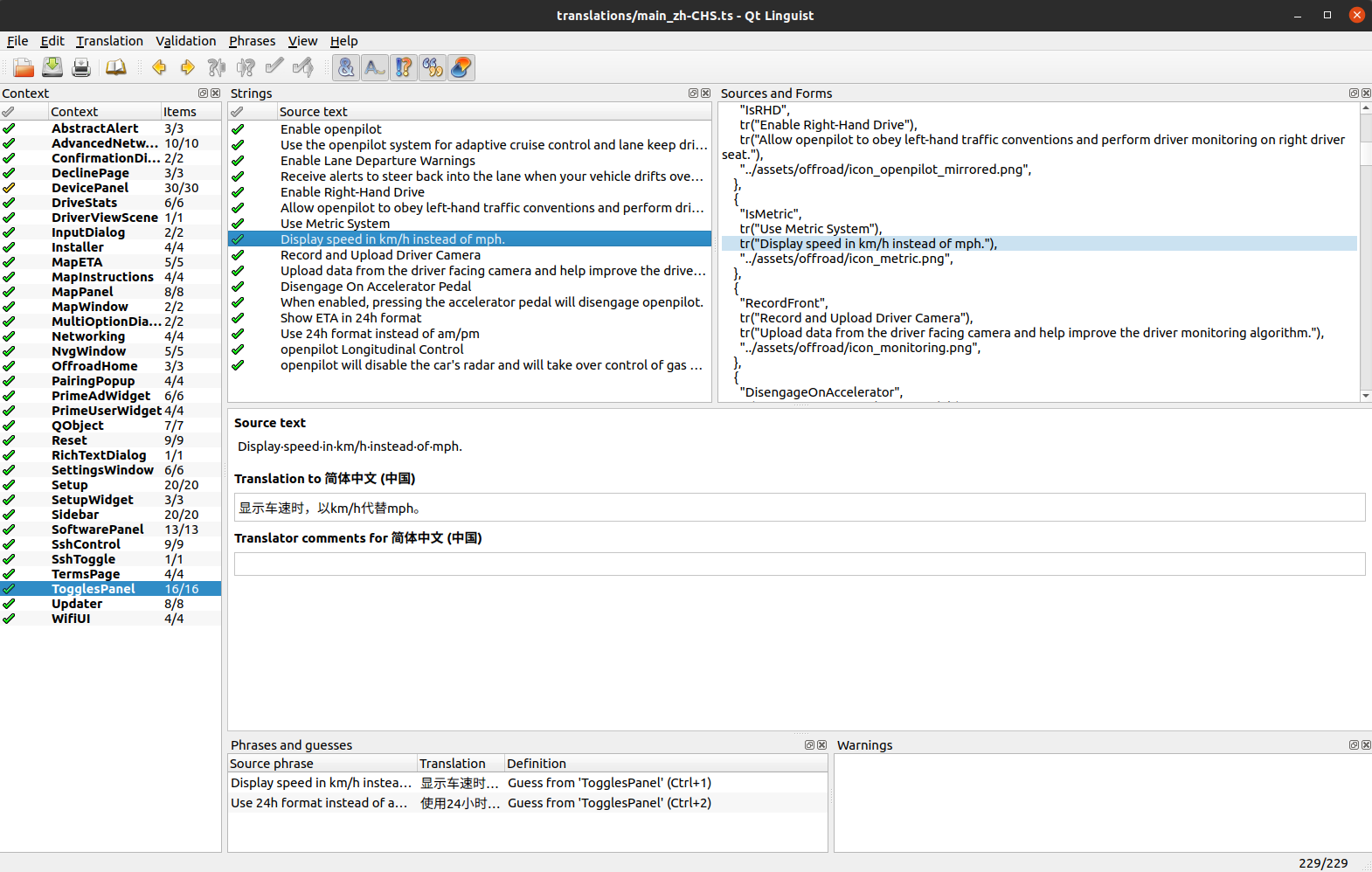

Kicking off openpilot internationalization is multilanguage support within the offroad UI. This release supports three new languages: Chinese (Simplified), Chinese (Traditional), and Korean. In the future, multilanguage support will expand to the rest of the UI, including the initial onboarding, onroad alerts, and Navigation instructions.

UI showing offroad home screen with Chinese (Simplified) translations

How to Contribute

Adding new languages or fixing existing translations is as easy as opening a pull request to openpilot. Translations live in selfdrive/ui/translations, which also has a README.md to help contributors get started translating using Qt Linguist.

Qt Linguist is a tool contributors can use to easily add new languages and edit translations

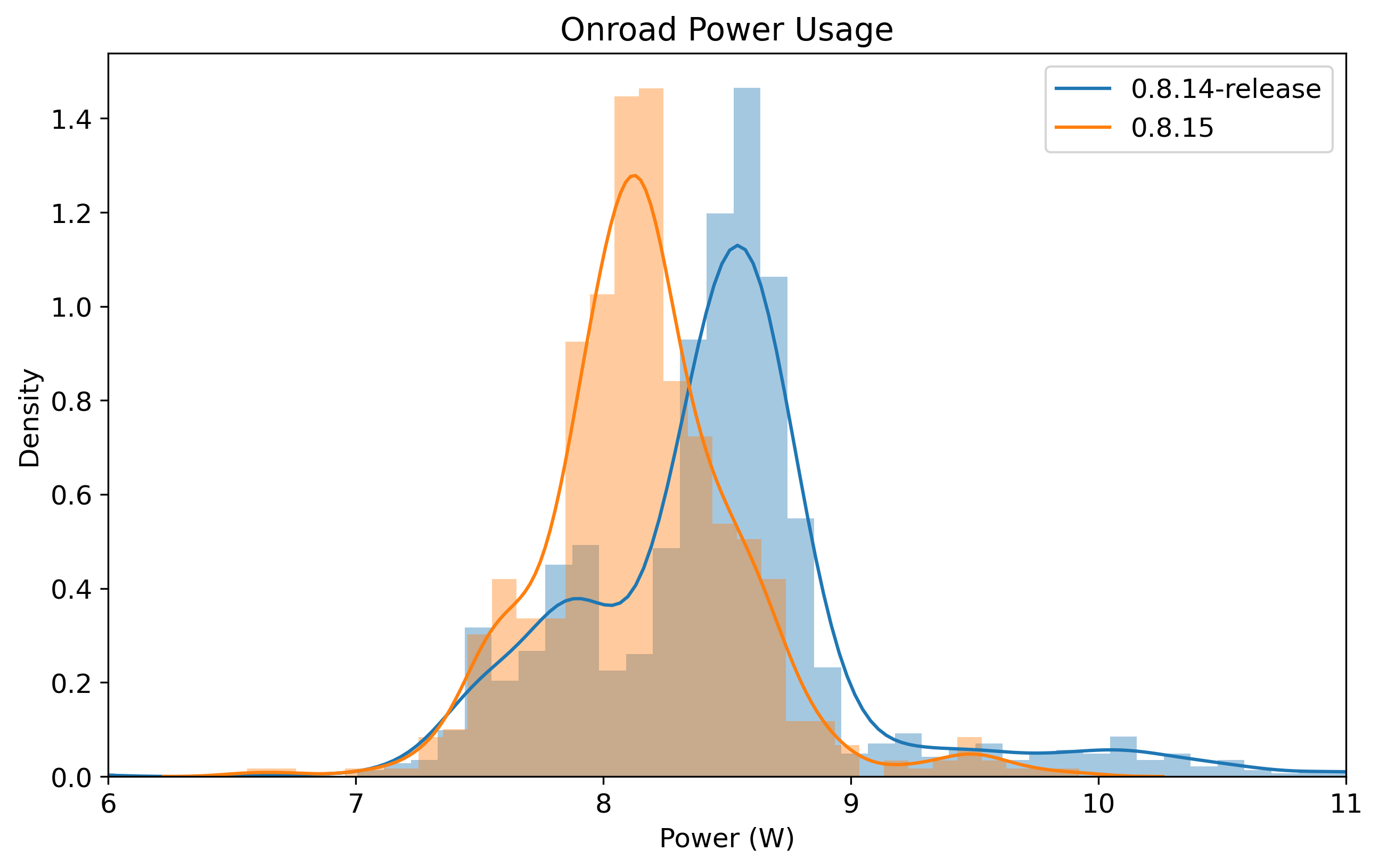

Power Reduction

Most of the onroad power savings have been realized by switching camerad and all consumers to a single video format, NV12. Before this change the raw bayered images from the camera were demosaiced into RGB, and then converted into YUV420. The RGB stream was consumed by the UI, while modeld and loggerd used the YUV420 stream.

However, this resulted in many copies of the full 1928x1208 picture data (@20fps times 3 cameras). A copy was made to convert from RGB to YUV in camerad after debayering, the UI made a copy into a texture, and loggerd also made a useless copy when encoding to H265. By getting rid of the RGB stream and switching the YUV stream to NV12, we were able to remove all these copies. The debayering now goes straight into NV12, which eliminates writing an RGB copy of the frame out to RAM.
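A back-of-the-envelope calculation gives a feel for how much data each full-frame copy moves. It assumes tightly packed buffers with no stride padding, 3 bytes per pixel for RGB, and 1.5 bytes per pixel for NV12. These are illustrative assumptions, not measurements from the device.

```python
WIDTH, HEIGHT = 1928, 1208  # frame size from the text
FPS, CAMERAS = 20, 3        # frame rate and camera count from the text

pixels = WIDTH * HEIGHT
rgb_frame = pixels * 3        # assumed: 8-bit RGB, 3 bytes per pixel
nv12_frame = pixels * 3 // 2  # NV12: full-size Y plane plus a half-size interleaved UV plane

frames_per_second = FPS * CAMERAS
rgb_per_second = rgb_frame * frames_per_second
nv12_per_second = nv12_frame * frames_per_second

print(f"RGB:  {rgb_frame / 1e6:.2f} MB per frame, {rgb_per_second / 1e6:.0f} MB/s per pass")
print(f"NV12: {nv12_frame / 1e6:.2f} MB per frame, {nv12_per_second / 1e6:.0f} MB/s per pass")
```

Under these assumptions, every write or read of a full RGB stream across all three cameras costs on the order of 400 MB/s of memory traffic, and each NV12 pass about half that, so removing several such passes adds up quickly.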

The UI now uses the EGL_LINUX_DMA_BUF_EXT EGL extension, which allows constructing a texture using a pointer into an ION buffer (CPU and GPU memory are shared on the 845). The lack of copy also reduces latency, leading to a more stable and smoother camera view in the UI. The 845’s hardware H265 encoder also has support for NV12, allowing compression of the video without requiring the intermediate copy to go from YUV420 to NV12 (#24519).

Removing all these copies reduced RAM bandwidth, which has a direct impact on power usage.

Distribution of power usage from the fleet over the last two weeks, on the previous release and master

AGNOS 5

Delta updates

openpilot now supports delta updates for future AGNOS updates. This reduces the amount of data that needs to be downloaded by reusing bits and pieces from the previous update. We use the casync CLI to split up the old and the new update into chunks and compress them. On the device side, we built a simple Python implementation to determine which chunks can be reused from the previous update, and which have to be downloaded from the server.
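The chunk-reuse idea can be sketched in a few lines. This is a simplified illustration, not the on-device implementation: it uses tiny fixed-size chunks and SHA-256 where casync uses content-defined chunk boundaries, and `apply_delta`, `chunk_hashes`, and the in-memory "server" are hypothetical names.

```python
import hashlib

CHUNK_SIZE = 4  # tiny fixed-size chunks, only for this demo


def chunk_hashes(data):
    """Split data into chunks and return (digest, chunk) pairs in order."""
    chunks = [data[i:i + CHUNK_SIZE] for i in range(0, len(data), CHUNK_SIZE)]
    return [(hashlib.sha256(chunk).hexdigest(), chunk) for chunk in chunks]


def apply_delta(old_image, new_manifest, download_chunk):
    """Rebuild the new image, downloading only chunks missing from the old one."""
    local = dict(chunk_hashes(old_image))  # digest -> chunk already on the device
    out = bytearray()
    downloaded = 0
    for digest in new_manifest:
        if digest in local:
            out += local[digest]  # reuse a chunk from the previous update
        else:
            chunk = download_chunk(digest)
            if hashlib.sha256(chunk).hexdigest() != digest:
                raise ValueError("downloaded chunk does not match its digest")
            out += chunk
            downloaded += 1
    return bytes(out), downloaded


old = b"AAAABBBBCCCCDDDD"
new = b"AAAAXXXXCCCCDDDD"
server = dict(chunk_hashes(new))               # stands in for the update server
manifest = [digest for digest, _ in chunk_hashes(new)]
image, fetched = apply_delta(old, manifest, server.__getitem__)
print(image == new, fetched)
```

Only the one changed chunk is fetched in this example; the other three are taken from the old image. Because progress is tracked per chunk, an interrupted download can pick up at the next missing chunk.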

A typical AGNOS update is about 650 MB; using casync reduces this to about 270 MB. Another benefit is that it’s now trivial to resume an update if the device reboots while downloading, instead of starting over.

VSCode SSH remote support

The new AGNOS automatically creates a symlink that ensures the VSCode remote server is installed in /data, so it just works. See the VSCode documentation for more information on how to use Remote SSH Targets.

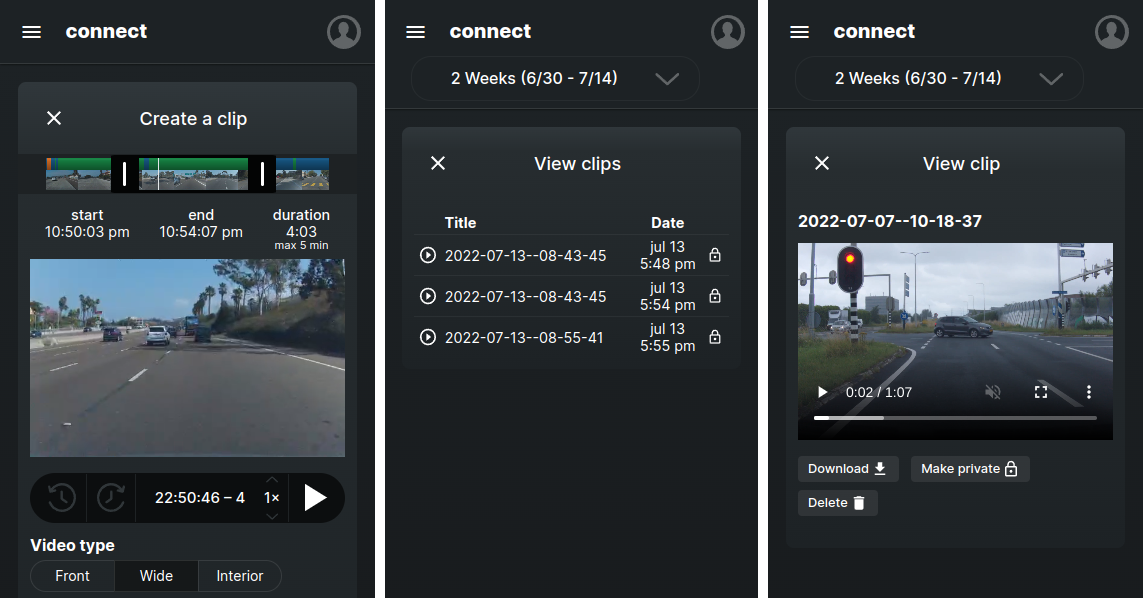

connect

Video clips export

comma connect now features an easy way to export and share video clips from your drives. To export a video clip, click the “create clip” button from the route viewer. Then, you can select a time range and video type to start the export. Your device will first upload the high resolution video files to our server, after which the video will be exported and can be viewed or shared from connect. comma prime subscribers can try this feature now from connect, or you can view an example clip.

Different views in connect for creating a clip, showing all clips, and viewing an exported clip.

MTBF Report

MTBF of immediate disables, i.e. unplanned disengagements, is up 3x in 0.8.14 compared to the previous release. As usual, we’ve fixed a bunch of small bugs on the way to 1000 hours MTBF.

MTBF analysis for openpilot v0.8.14-release

MTBF is the point estimate

MTBF_L is the two-sided 90.0% confidence interval

2185080 segments, 36115 hours, 11557 engaged hours

Immediate disables - 330 hours MTBF

+----+------------------------+---------+----------+---------+-----------+
|    | event                  |    MTBF |   MTBF_L |   count |   dongles |
+====+========================+=========+==========+=========+===========+
|  0 | booted onroad          |  770.44 |   500.35 |      15 |         8 |
+----+------------------------+---------+----------+---------+-----------+
|  1 | event controlsMismatch |  963.05 |   594.4  |      12 |         5 |
+----+------------------------+---------+----------+---------+-----------+
|  2 | event canError         | 1926.11 |   975.87 |       6 |         4 |
+----+------------------------+---------+----------+---------+-----------+
|  3 | event steerUnavailable | 5778.32 |  1835.61 |       2 |         2 |
+----+------------------------+---------+----------+---------+-----------+

Soft disables - 39 hours MTBF

+----+----------------------------+----------+----------+---------+-----------+
|    | event                      |     MTBF |   MTBF_L |   count |   dongles |
+====+============================+==========+==========+=========+===========+
|  0 | event steerTempUnavailable |    56.37 |    50.21 |     205 |        81 |
+----+----------------------------+----------+----------+---------+-----------+
|  1 | event commIssue            |   462.27 |   330.98 |      25 |        10 |
+----+----------------------------+----------+----------+---------+-----------+
|  2 | event cameraFrameRate      |   502.46 |   354.66 |      23 |         8 |
+----+----------------------------+----------+----------+---------+-----------+
|  3 | event commIssueAvgFreq     |   502.46 |   354.66 |      23 |         8 |
+----+----------------------------+----------+----------+---------+-----------+
|  4 | event calibrationInvalid   |   577.83 |   397.65 |      20 |         8 |
+----+----------------------------+----------+----------+---------+-----------+
|  5 | event cameraMalfunction    |   608.24 |   414.53 |      19 |         8 |
+----+----------------------------+----------+----------+---------+-----------+
|  6 | event overheat             |  1050.6  |   634.72 |      11 |        11 |
+----+----------------------------+----------+----------+---------+-----------+
|  7 | event vehicleModelInvalid  |  1444.58 |   800.62 |       8 |         7 |
+----+----------------------------+----------+----------+---------+-----------+
|  8 | event lowMemory            |  1650.95 |   878.96 |       7 |         5 |
+----+----------------------------+----------+----------+---------+-----------+
|  9 | event controlsdLagging     |  1926.11 |   975.87 |       6 |         5 |
+----+----------------------------+----------+----------+---------+-----------+
| 10 | event espDisabled          |  2889.16 |  1262.54 |       4 |         4 |
+----+----------------------------+----------+----------+---------+-----------+
| 11 | event processNotRunning    |  3852.22 |  1490.48 |       3 |         2 |
+----+----------------------------+----------+----------+---------+-----------+
| 12 | event posenetInvalid       |  5778.32 |  1835.61 |       2 |         2 |
+----+----------------------------+----------+----------+---------+-----------+
| 13 | event usbError             |  5778.32 |  1835.61 |       2 |         1 |
+----+----------------------------+----------+----------+---------+-----------+
| 14 | event radarFault           | 11556.6  |  2436.12 |       1 |         1 |
+----+----------------------------+----------+----------+---------+-----------+
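For readers curious how numbers like these can be derived, the sketch below reproduces the MTBF and MTBF_L columns under two assumptions that the report does not state. The first is that MTBF is engaged hours divided by event count. The second is that MTBF_L is the lower end of a two-sided 90% interval for a Poisson event count. The 11556.6 engaged hours figure is inferred from the radarFault row (one event), and the function names are hypothetical.

```python
import math


def poisson_cdf(n, mu):
    """P(N <= n) for a Poisson-distributed count N with mean mu."""
    term = math.exp(-mu)
    total = term
    for i in range(1, n + 1):
        term *= mu / i
        total += term
    return total


def mtbf_estimates(engaged_hours, count, confidence=0.90):
    """Return (point estimate, lower bound) of MTBF in hours for count >= 1."""
    alpha = (1.0 - confidence) / 2.0  # 5% in each tail of a two-sided 90% interval
    # Bisect for the largest plausible mean count: P(N <= count | mean) = alpha
    lo, hi = 0.0, 2.0 * count + 50.0
    for _ in range(200):
        mid = (lo + hi) / 2.0
        if poisson_cdf(count, mid) > alpha:
            lo = mid
        else:
            hi = mid
    return engaged_hours / count, engaged_hours / hi


ENGAGED_HOURS = 11556.6  # inferred from the table, see the note above
mtbf, mtbf_lower = mtbf_estimates(ENGAGED_HOURS, count=15)
print(round(mtbf, 2), round(mtbf_lower, 2))  # approximately 770.44 and 500.35
```

With 15 events this matches the "booted onroad" row, and with a single event it gives a lower bound of about 2436 hours, in line with the radarFault row. The gap between the two columns widens as the count shrinks, which is why rare events have lower bounds far below their point estimates.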

Cars

As of this release, openpilot supports 190 cars. This release includes support for three new platforms: the Hyundai CAN-FD platform used on the Kia EV6, the new Honda Bosch platform used on the 2022 Honda Civic, and the Ram DT platform used on the Ram 1500. Support for more models on these platforms should follow soon.

ECU firmware query improvements

We’ve made some important changes to the ECU firmware query that both improve code maintainability and the user experience.

Previously, when we wanted to add queries to support new car brands, we needed to test all the other brands to ensure that they wouldn’t respond to the new brand’s queries. This was because openpilot would run all the queries and use the last successful responses for fingerprinting, which could include responses from multiple brands’ queries. Now, openpilot runs each query and tries to fingerprint using only responses from that particular query.

We also sped up the overall fingerprinting time by doing a quick check to determine a few candidates for the car brand. Instead of doing the queries in the same order every time, we then use those candidates to sort the queries (#23311).
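The two changes above can be sketched together. This is a toy model rather than openpilot's fingerprinting code: the function, the firmware database layout, and the version strings are all hypothetical.

```python
def fingerprint(brands, run_query, fw_database, likely_brands):
    """Run per-brand queries, likely brands first, and match each query's responses alone."""
    # Stable sort: brands flagged as likely candidates move to the front
    ordered = sorted(brands, key=lambda brand: brand not in likely_brands)
    for brand in ordered:
        responses = run_query(brand)  # ECU name -> firmware version, from this query only
        for car, expected in fw_database.get(brand, {}).items():
            if all(responses.get(ecu) in versions for ecu, versions in expected.items()):
                return car
    return None


# Hypothetical firmware database and a simulated car that only answers Toyota queries
FW_DATABASE = {
    "toyota": {"COROLLA": {"eps": {"eps-v1", "eps-v2"}, "engine": {"eng-v7"}}},
    "honda": {"CIVIC": {"eps": {"eps-h3"}}},
}
CAR_ECUS = {"toyota": {"eps": "eps-v2", "engine": "eng-v7"}}
queries_run = []


def run_query(brand):
    queries_run.append(brand)
    return CAR_ECUS.get(brand, {})


car = fingerprint(["honda", "toyota"], run_query, FW_DATABASE, likely_brands=["toyota"])
print(car, queries_run)
```

Because the Toyota query is tried first and its responses are matched on their own, the Honda query never needs to run in this example, and no responses from different brands are mixed together.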

Bug Fixes

- Toyota: fix unreliable blindspot monitor detection (#24964)

- Fixed rare race condition that caused a controlsMismatch (commaai/panda#988)

- Chrysler: fix incorrect steering angle signals thanks to realfast! (#24926)

- Honda Bosch longitudinal: fix controls mismatch (#24788)

Enhancements

- Wait until lead car moves to request ACC resume (#24873)

- Chrysler: auto resume ACC from standstill (#24883)

- Chrysler: ECU firmware fingerprinting thanks to realfast! (#24460)

- FPv2: log query request and response address (#24733)

- FPv2: log and fingerprint on responses by brand (#25042)

- FPv2: order FW requests by most likely brands (#23311)

- New torque controller used on select Hyundai, Kia, and Toyota models

Car Ports

- Honda Civic 2022 support (#24535)

- Hyundai Tucson 2021 support thanks to bluesforte! (#24791)

- Kia EV6 2022 support (#24485)

- Lexus NX Hybrid 2020 support thanks to AlexandreSato! (#24796)

- Ram 1500 2019-22 support thanks to realfast! (#24878)

Join the team

We’re hiring great engineers to own and work on all parts of the openpilot stack. If anything here interests you, apply for a job or join us on GitHub!
